Crafting the Agentic Harness

Build Your Own AI Agent

Sandip Wane · Applied AI at CloudHedge

What this session is about

- Understand the agent loop

How LLMs become agents: the think → act → observe cycle

- Build an agentic harness from scratch

Bun + Vercel AI SDK + AWS Bedrock. No frameworks, just code

- Give your agent tools

File I/O, shell access, sub-agents: the building blocks of a coding agent

- Add memory & context

System prompts, message history, and stateful conversations

- Walk away with a working agent

A coding assistant you can extend, customize, and ship

Not covered in this session

- Evals

Measuring agent quality, accuracy, and reliability

- RAG

Retrieval-augmented generation and vector search

- MCP Client

Model Context Protocol for connecting to external tools

Assumptions

- Bun / Node.js

Runtime for all demos. Node.js works too

- Vercel AI SDK

Unified interface for model calls, tools, and streaming

- AWS Bedrock

Model provider. Swap for OpenAI/Anthropic API if you prefer

- Mixed experience levels

No single "right" background. We will build up from first principles

- Ask questions anytime

This talk assumes familiarity with several concepts. Interrupt if anything is unclear

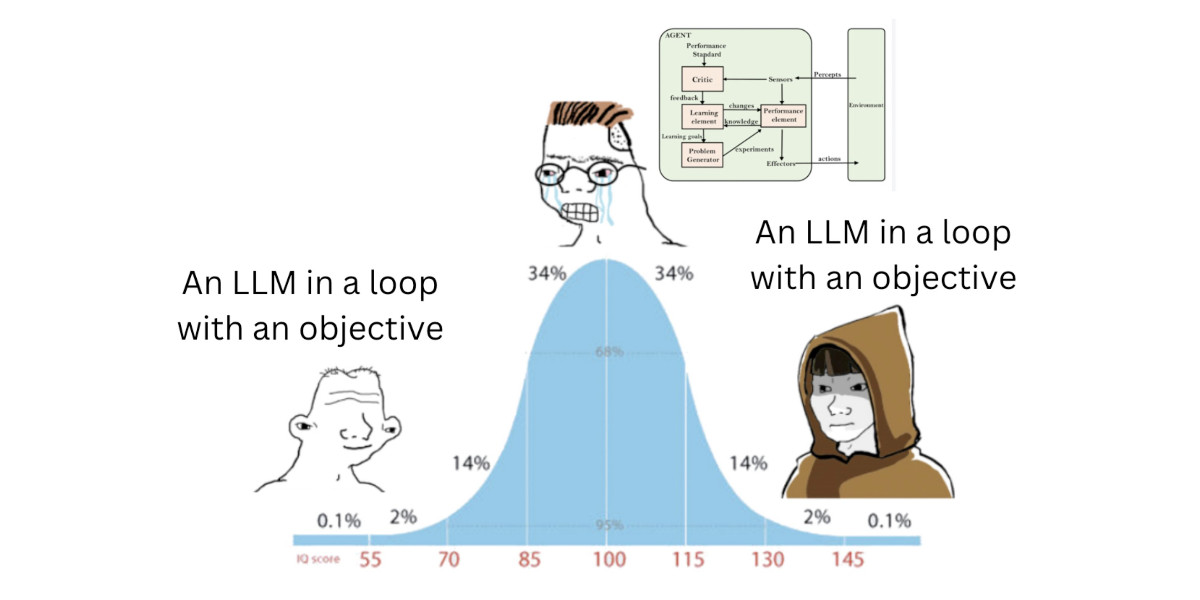

What is an agent?

LLM + Tools + Loop + Goal = Agent

- LLM: reads context, picks the next action

- Tools: functions the LLM can call to read files, run code, hit APIs

- Loop: call tool, get result, decide next step, repeat

- Goal: a stopping point so the loop knows when to end

An AI agent is a system combining an LLM with tools, capable of reasoning in a loop, making decisions, and acting on its environment to pursue a goal autonomously.

Anthropic's Take

Anthropic splits "agentic systems" into workflows (LLM follows predefined code paths) and agents (LLM decides its own next step). The difference: who controls execution.
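Here is a minimal sketch of that distinction in plain TypeScript. `Llm` and `Policy` are hypothetical functions standing in for model calls, and no provider is involved. In the workflow, the code fixes the order of steps. In the agent, the policy (the model) picks each next step, and the code only runs it under an assumed step cap.

```typescript
// Hypothetical stand-ins: in a real harness each of these would be an LLM call.
type Llm = (prompt: string) => string;
type Action =
  | { tool: "search" | "summarize"; input: string }
  | { tool: "done"; answer: string };
type Policy = (transcript: string[]) => Action;

// Workflow: the CODE fixes the path (search, then summarize, always).
function workflow(llm: Llm, question: string): string {
  const notes = llm(`search: ${question}`);
  return llm(`summarize: ${notes}`);
}

// Agent: the MODEL picks each next step; the code runs it and enforces a step cap.
function agent(policy: Policy, llm: Llm, maxSteps = 5): string {
  const transcript: string[] = [];
  for (let i = 0; i < maxSteps; i++) {
    const action = policy(transcript);
    if (action.tool === "done") return action.answer;
    transcript.push(llm(`${action.tool}: ${action.input}`));
  }
  return "stopped: step limit reached";
}
```

Both versions use the same LLM. The only difference is which side decides what runs next.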

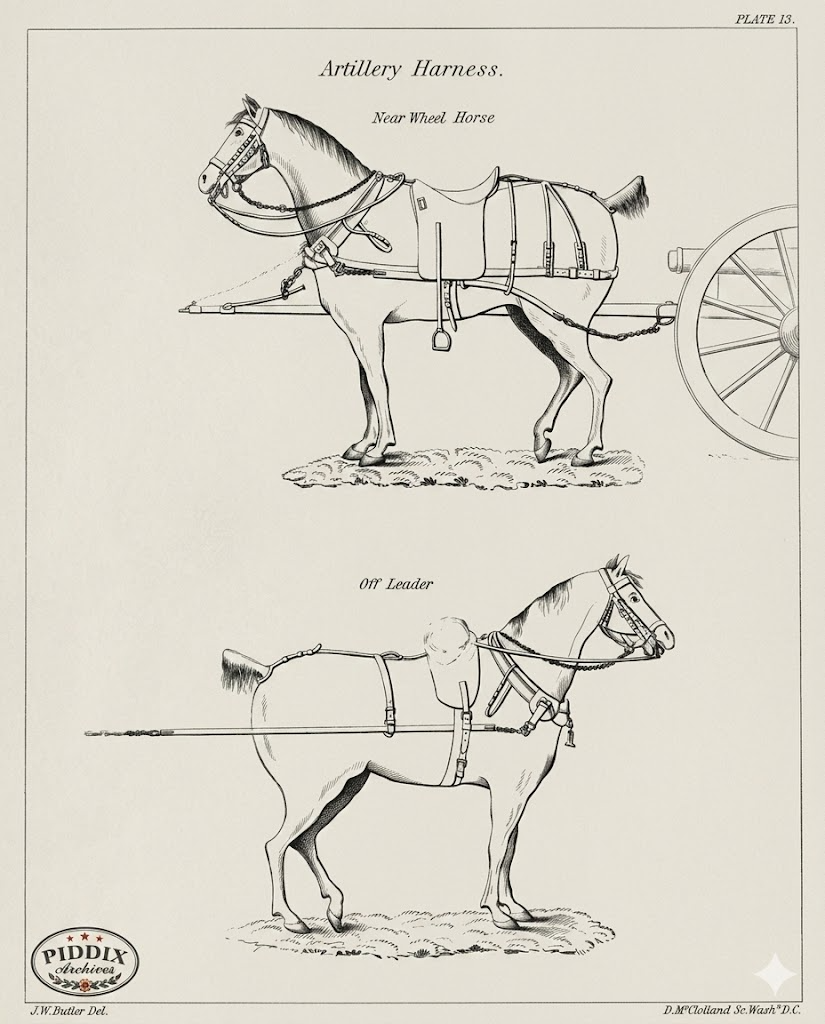

What is a harness?

A harness wraps an LLM with tools, memory, and a control loop so it can work toward a goal.

Horse Analogy

- LLM: a wild horse (raw power, no direction)

- Harness: reins, saddle, and a path to follow

- Without a harness: the LLM just generates text

- With a harness: the LLM acts, observes, and achieves goals

Best examples of LLM harnesses

The Agent Loop

```mermaid
flowchart TD
    User(["User Prompt"]) --> Setup["Setup<br/>system prompt + tools + messages"]
    Setup --> LLM["Call LLM<br/>streamText"]
    LLM --> Decide{{"Tool calls?"}}
    Decide -->|Yes| Exec["Execute Tools<br/>append results to messages"]
    Exec --> LLM
    Decide -->|No| Done(["Return Response"])
    classDef start fill:#2ea043,stroke:#238636,color:#fff
    classDef process fill:#1f6feb,stroke:#1158c7,color:#fff
    classDef decision fill:#d29922,stroke:#b38600,color:#fff
    classDef action fill:#8b5cf6,stroke:#7c3aed,color:#fff
    classDef finish fill:#2ea043,stroke:#238636,color:#fff
    class User start
    class Setup process
    class LLM process
    class Decide decision
    class Exec action
    class Done finish
```
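The flowchart maps onto a short loop. This sketch uses simplified, hypothetical `Message`, `Model`, and `ToolFn` types rather than the AI SDK's own, since the SDK can run this loop for you. `maxSteps` is an assumed safety cap, so a model that never stops calling tools cannot spin forever.

```typescript
// Simplified, hypothetical types (not the AI SDK's real ones).
type ToolCall = { id: string; name: string; args: Record<string, unknown> };
type Message =
  | { role: "system" | "user"; content: string }
  | { role: "assistant"; content: string; toolCalls?: ToolCall[] }
  | { role: "tool"; toolCallId: string; content: string };
type ModelReply = { text: string; toolCalls: ToolCall[] };
type Model = (messages: Message[]) => Promise<ModelReply>;
type ToolFn = (args: Record<string, unknown>) => Promise<string>;

async function runAgent(
  model: Model,
  tools: Record<string, ToolFn>,
  system: string,
  prompt: string,
  maxSteps = 20,
): Promise<string> {
  // Setup: system prompt + tools + messages
  const messages: Message[] = [
    { role: "system", content: system },
    { role: "user", content: prompt },
  ];
  for (let step = 0; step < maxSteps; step++) {
    // Call LLM with the full history
    const reply = await model(messages);
    messages.push({ role: "assistant", content: reply.text, toolCalls: reply.toolCalls });
    // Tool calls? No: return the response
    if (reply.toolCalls.length === 0) return reply.text;
    // Yes: execute each tool, append its result, then loop back to the LLM
    for (const call of reply.toolCalls) {
      const fn = tools[call.name];
      const result = fn ? await fn(call.args) : `error: unknown tool ${call.name}`;
      messages.push({ role: "tool", toolCallId: call.id, content: result });
    }
  }
  throw new Error(`no final answer after ${maxSteps} steps`);
}
```

Unknown tools are reported back as text instead of crashing the loop, so the model gets a chance to correct itself.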

Demo · LLM call, no memory

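```ts
import { generateText } from "ai";
import { createAmazonBedrock } from "@ai-sdk/amazon-bedrock";

const bedrock = createAmazonBedrock({ region: "us-east-1" });
const model = bedrock("us.anthropic.claude-opus-4-6-v1");

// Stateless: only the current prompt reaches the model on each call
async function ask(prompt: string) {
  const { text } = await generateText({ model, prompt });
  return text;
}
```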

Each ask() call sends only the current prompt. No history, no context, no memory.

```
=== Forgetful Claude ===
Each turn is independent.

> My name is Sandip.
Hi Sandip! Nice to meet you.

> What's my name?
I don't have any information about your name...
```

Claude forgets the name between turns. No memory lives in the model.

Memory isn't a model feature. It's something the harness assembles by replaying past turns on every call.

Demo · LLM call with session memory

See demo
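Here is one way the session-memory version could look. It assumes a hypothetical `ChatModel` function that receives the full history. With the AI SDK, that function would wrap `generateText({ model, messages })`. The only change from `ask()` above is that the harness keeps a `messages` array and replays it on every call.

```typescript
// Minimal message shape, mirroring { role, content } from the slides.
type Role = "user" | "assistant";
type ChatMessage = { role: Role; content: string };
// Hypothetical model signature: takes the whole history, returns the reply text.
type ChatModel = (messages: ChatMessage[]) => Promise<string>;

function createSession(model: ChatModel) {
  const messages: ChatMessage[] = []; // the harness owns the history
  return {
    async ask(prompt: string): Promise<string> {
      messages.push({ role: "user", content: prompt });
      // Replay every past turn; pass a copy so the model cannot mutate the list
      const reply = await model([...messages]);
      messages.push({ role: "assistant", content: reply });
      return reply;
    },
    history: (): ChatMessage[] => [...messages],
  };
}
```

The model itself is still stateless. "Remembering" the name only works because the earlier turn is resent.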

Message structure & turn markers

`messages: ModelMessage[] = [{ role, content }]`

`role` is the turn marker. `content` is the text. The harness owns this list; the model only reads it.

The list grows every turn

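In a plain chat turn, the harness appends two messages (the user prompt and the assistant reply) and resends the whole array on every call. Tool calls add more messages per turn. The model never mutates the list.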

What is a coding agent?

Coding Agent = Agent + Code Tools + Repo Context + Code Goal

- Agent: LLM + Tools + Loop + Goal, as defined earlier

- Code Tools: Bash, Read, Edit, Grep, Glob to read and change the repo

- Repo Context: a repo, a working directory, a set of files

- Code Goal: ship a change, fix a bug, add a feature

Same loop, same message shape. What makes it coding is the toolbox and the working directory.

What is a tool?

A tool is a function the LLM can decide to call.

The harness registers it, the model requests it, the harness runs it, and the result flows back as a tool message.

- Name: stable identifier the model calls it by

- Description: plain-English doc that teaches the model when to use it

- Input Schema: Zod or JSON schema that validates arguments

- Execute: the actual function your harness runs locally

The model never runs code. It only asks. Your harness decides whether and how to execute.
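The four parts above can be sketched without any library. This is a plain-TypeScript stand-in for the AI SDK's `tool()`. The `validate` function is a hypothetical hand-written check that plays the role of the Zod schema, and `dispatch` shows the harness side: look up the tool, validate the arguments, and only then execute.

```typescript
// Hypothetical tool shape; real harnesses use the AI SDK's tool() with a Zod schema.
interface Tool<A> {
  name: string;                          // stable identifier the model calls it by
  description: string;                   // teaches the model when to use it
  validate: (input: unknown) => A;       // stands in for the input schema
  execute: (args: A) => Promise<string>; // runs locally, inside the harness
}

// What the model sends back: a request, never executed code.
type ToolRequest = { name: string; input: unknown };

const echoTool: Tool<{ text: string }> = {
  name: "echo",
  description: "Repeat the given text back.",
  validate: (input) => {
    const t = (input as { text?: unknown } | null)?.text;
    if (typeof t === "string") return { text: t };
    throw new Error("expected { text: string }");
  },
  execute: async ({ text }) => text,
};

// The harness decides: unknown names and bad arguments become error text
// for the model instead of crashing the loop.
async function dispatch(tools: Tool<any>[], req: ToolRequest): Promise<string> {
  const t = tools.find((x) => x.name === req.name);
  if (!t) return `error: unknown tool "${req.name}"`;
  try {
    return await t.execute(t.validate(req.input));
  } catch (e) {
    return `error: ${(e as Error).message}`;
  }
}
```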

First tool · bash

The single most powerful tool. One shell, and the model can reach the whole OS.

- One tool replaces dozens: `ls`, `grep`, `find`, `curl`, `git`

- Model picks the right Unix command for the goal

- Returns `stdout` and `stderr` as the tool result

- Full shell access. Gate it with a permission prompt in production
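A permission gate for the last bullet could look something like the sketch below. `confirm` is a hypothetical callback standing in for whatever UI asks the user (for example, a readline prompt). The auto-allowed command list is an assumption to tune for your setup. The check is deliberately conservative: anything that chains, pipes, redirects, or substitutes always prompts.

```typescript
// Hypothetical UI hook: resolves true if the user approves the command.
type Confirm = (command: string) => Promise<boolean>;

// Assumed read-only commands that skip the prompt. Tune for your own setup.
const AUTO_ALLOW = new Set(["ls", "pwd", "cat", "git status", "git log"]);

function isAutoAllowed(command: string): boolean {
  // Shell metacharacters (chaining, pipes, redirects, substitution) always prompt
  if (/[;&|><`$]/.test(command) || command.includes("\n")) return false;
  const words = command.trim().split(/\s+/);
  // Match a one-word command ("ls") or a two-word one ("git status")
  return AUTO_ALLOW.has(words[0]) || AUTO_ALLOW.has(words.slice(0, 2).join(" "));
}

async function gate(
  command: string,
  confirm: Confirm,
): Promise<{ allowed: boolean; reason: string }> {
  if (isAutoAllowed(command)) return { allowed: true, reason: "auto-allowed" };
  const ok = await confirm(command);
  return { allowed: ok, reason: ok ? "approved by user" : "denied by user" };
}
```

Inside the bash tool's `execute`, call `gate()` first. If it is not allowed, return the reason as the tool result instead of running the command. The model then sees why and can try another approach.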

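```ts
import { tool } from 'ai';
import { z } from 'zod';
import { exec } from 'node:child_process';
import { promisify } from 'node:util';

const run = promisify(exec);

export const bash = tool({
  description: 'Run a shell command on the local machine and return stdout / stderr.',
  inputSchema: z.object({
    command: z.string().describe('The shell command to execute'),
  }),
  execute: async ({ command }) => {
    try {
      const { stdout, stderr } = await run(command);
      return { stdout, stderr, exitCode: 0 };
    } catch (err: any) {
      // The promisified exec rejects on a non-zero exit code; the error carries
      // stdout/stderr, so hand them back and let the model read the failure
      return { stdout: err.stdout ?? '', stderr: err.stderr ?? String(err), exitCode: err.code ?? 1 };
    }
  },
});
```

File read · write · apply diff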

Three sibling tools let the agent perceive and mutate the repo.

Inputs: read(`path`) · write(`path`, `content`) · edit(`path`, `old_string`, `new_string`)

- Read before write. The model sees what it is changing

- Line numbers help reasoning. The model can point to a location

- Edit is safer than write. Only the matched slice changes

- Apply diff pattern. Avoids regenerating the whole file on every change

```ts
import { tool } from 'ai';
import { z } from 'zod';
import { readFile, writeFile } from 'node:fs/promises';

export const read = tool({
  description: 'Read a file. Returns content with line numbers.',
  inputSchema: z.object({ path: z.string() }),
  execute: async ({ path }) => {
    const text = await readFile(path, 'utf8');
    // Prefix each line with its 1-based number and a tab so the model can cite locations
    return text.split('\n')
      .map((line, i) => `${i + 1}\t${line}`)
      .join('\n');
  },
});

export const write = tool({
  description: 'Create a new file or overwrite an existing one.',
  inputSchema: z.object({ path: z.string(), content: z.string() }),
  execute: async ({ path, content }) => {
    await writeFile(path, content, 'utf8');
    return { ok: true, path };
  },
});

export const edit = tool({
  description: 'Apply a diff: replace one exact, unique slice of a file.',
  inputSchema: z.object({
    path: z.string(),
    old_string: z.string(),
    new_string: z.string(),
  }),
  execute: async ({ path, old_string, new_string }) => {
    const text = await readFile(path, 'utf8');
    const at = text.indexOf(old_string);
    if (at === -1) throw new Error('old_string not found');
    // Refuse ambiguous edits: the slice must occur exactly once
    if (text.indexOf(old_string, at + 1) !== -1) {
      throw new Error('old_string is not unique; include more surrounding lines');
    }
    // Splice by index rather than String.replace(), which would treat
    // "$&", "$1", ... inside new_string as replacement patterns
    const next = text.slice(0, at) + new_string + text.slice(at + old_string.length);
    await writeFile(path, next, 'utf8');
    return { ok: true };
  },
});
```

Subagents · the Task tool

See demo
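The demo itself is not reproduced here. What follows is a hypothetical sketch of the usual idea behind a Task tool. The subagent runs the same kind of loop, but with its own fresh message list, and only its final answer returns to the parent as a single tool result. The simplified types, `loop`, and `makeTaskTool` are illustrative names, not an SDK API.

```typescript
// Simplified, hypothetical types for the sketch.
type Msg = { role: "system" | "user" | "assistant" | "tool"; content: string };
type Reply = { text: string; toolCall?: { name: string; input: string } };
type Model = (messages: Msg[]) => Promise<Reply>;
type Tools = Record<string, (input: string) => Promise<string>>;

// The same agent loop for parent and child.
async function loop(model: Model, tools: Tools, messages: Msg[], maxSteps = 10): Promise<string> {
  for (let i = 0; i < maxSteps; i++) {
    const reply = await model(messages);
    messages.push({ role: "assistant", content: reply.text });
    const call = reply.toolCall;
    if (!call) return reply.text;
    const fn = tools[call.name];
    const result = fn ? await fn(call.input) : `error: unknown tool ${call.name}`;
    messages.push({ role: "tool", content: result });
  }
  return "stopped: step limit reached";
}

// The child gets a fresh history and its own (possibly narrower) toolset.
// Leaving the task tool out of childTools keeps subagents from recursing.
function makeTaskTool(model: Model, childTools: Tools) {
  return async (description: string): Promise<string> => {
    const childMessages: Msg[] = [
      { role: "system", content: "You are a subagent. Complete the task and reply with a short summary." },
      { role: "user", content: description },
    ];
    return loop(model, childTools, childMessages);
  };
}
```

The design choice is context isolation. The child's intermediate tool calls never enter the parent's message list, so a long search costs the parent only one tool message.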

Skill tool

See demo
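The demo is not reproduced here. This sketch assumes skills follow the pattern popularized by Anthropic's Agent Skills: each skill has a name, a one-line description, and a longer body of instructions. Only the short index goes in the system prompt, and a skill tool loads a body on demand. The registry, skill names, and helper functions are hypothetical. A real harness would typically read the bodies from files on disk.

```typescript
// Hypothetical in-memory skill registry.
type Skill = { name: string; description: string; body: string };

const skills: Skill[] = [
  {
    name: "release-notes",
    description: "Draft release notes from git history.",
    body: "1. Run `git log --oneline` since the last tag.\n2. Group commits by type.\n3. Write one bullet per user-visible change.",
  },
  {
    name: "db-migration",
    description: "Add a schema migration safely.",
    body: "1. Create a new migration file.\n2. Never edit an applied migration.\n3. Run the migration tests.",
  },
];

// Goes into the system prompt: names and descriptions only, so unused skills stay cheap.
function skillIndex(all: Skill[]): string {
  return "Available skills (call the skill tool to load one):\n" +
    all.map((s) => `- ${s.name}: ${s.description}`).join("\n");
}

// The skill tool: full instructions enter the context only when the model asks.
async function loadSkill(all: Skill[], name: string): Promise<string> {
  const s = all.find((x) => x.name === name);
  return s ? s.body : `error: no skill named "${name}". ${skillIndex(all)}`;
}
```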

Resources

- How to build an agent · ampcode.com

- MIT lecture on agentic coding · youtube.com

- How to build a multi-turn AI agent · cloudhedge.io, by Sandip Wane

- Vercel AI SDK official tutorial · vercel.com/academy

- Defining "Agent" · simonwillison.net

Thank you

Now go build your own agent
